Resolve Merge Conflicts the Easy Way

Git is great at merging until it isn’t. Most of the time, when I rebase my feature branch against the main branch, it all goes to plan. Nothing to do for me. But when it doesn’t go to plan, it can be a big mess. Git dumps a wall of conflict markers on you. You resolve those, continue the rebase, and the next commit has conflicts too. Depending on the scope of changes, resolving merge conflicts can be a very tedious chore. The temptation to `git rebase --abort` and pretend this never happened is overwhelming.

One Year at PostHog

The last thing an engineer wants to see is their GitHub avatar next to the pull request that caused an outage.

tree-me: Because git worktrees shouldn't be a chore

I firmly believe that Git worktrees are one of the most underrated features of Git. I ignored them for years because I didn’t understand them or how to adapt them to my workflow.

Spelungit: When `git log --grep` isn't enough

Supporting a large codebase is challenging. Sometimes, the questions you have can’t be answered by the code at hand. For example, “When did we switch from class components to hooks and what discussion led to that decision?” or “Did we used to have logging here for invalid keys?” or “Did we ever have code to handle Zstd compression?”

Cleaning up gone branches

A long time ago, I wrote a useful set of git aliases to support the GitHub flow. My favorite alias was bdone which would:

Using PostHog in your .NET applications

PostHog helps you build better products. It tracks what users do. It controls features in production. And now it works with .NET!

New Year, New Job

Last year I wrote a post, career chutes and ladders, where I proposed that a linear climb to the C-suite is not the only approach to a satisfying career. At the end of the post, I mentioned I was stepping off the ladder to take on an IC role.

Deserializing JSON to a string or a value

I love using Refit to call web APIs in a nice type-safe manner. Sometimes though, APIs don’t want to cooperate with your strongly-typed hopes. For example, you might run into an API written by a hipster in a beanie, aka a dynamic-type enthusiast. I don’t say that pejoratively. Some of my closest friends write Python and Ruby.

Career Chutes and Ladders

The career ladder is a comforting fiction we’re sold as we embark on our careers: Start as Junior, climb to Senior, then Principal, Director, and VP. One day, you defeat the final boss, receive a key to the executive bathroom, and join the C-suite. You’ve made it!

Supercharge your debugging with git bisect

Ever look for a recipe online only to scroll through a self-important rambling 10-page essay about a trip to Tuscany that inspired the author to create the recipe? Finally, after wearing out your mouse, trackpad, or Page Down key to scroll to the end, you get to the actual recipe. I hate those.

Custom config sections using static virtual members in interfaces

C# 11 introduced a new feature - static virtual members in interfaces. The primary motivation for this feature is to support generic math algorithms. The mention of math might make some ignore this feature, but it turns out it can be useful in other scenarios.

.NET Aspire vs Docker.

This is a follow-up to my previous post where I compared .NET Aspire to NuGet. In that post, I promised I would follow up with a comparison of using .NET Aspire to add a service dependency to a project versus using Docker. And looky here, I’m following through for once!

Is .NET Aspire NuGet for Cloud Service Dependencies?

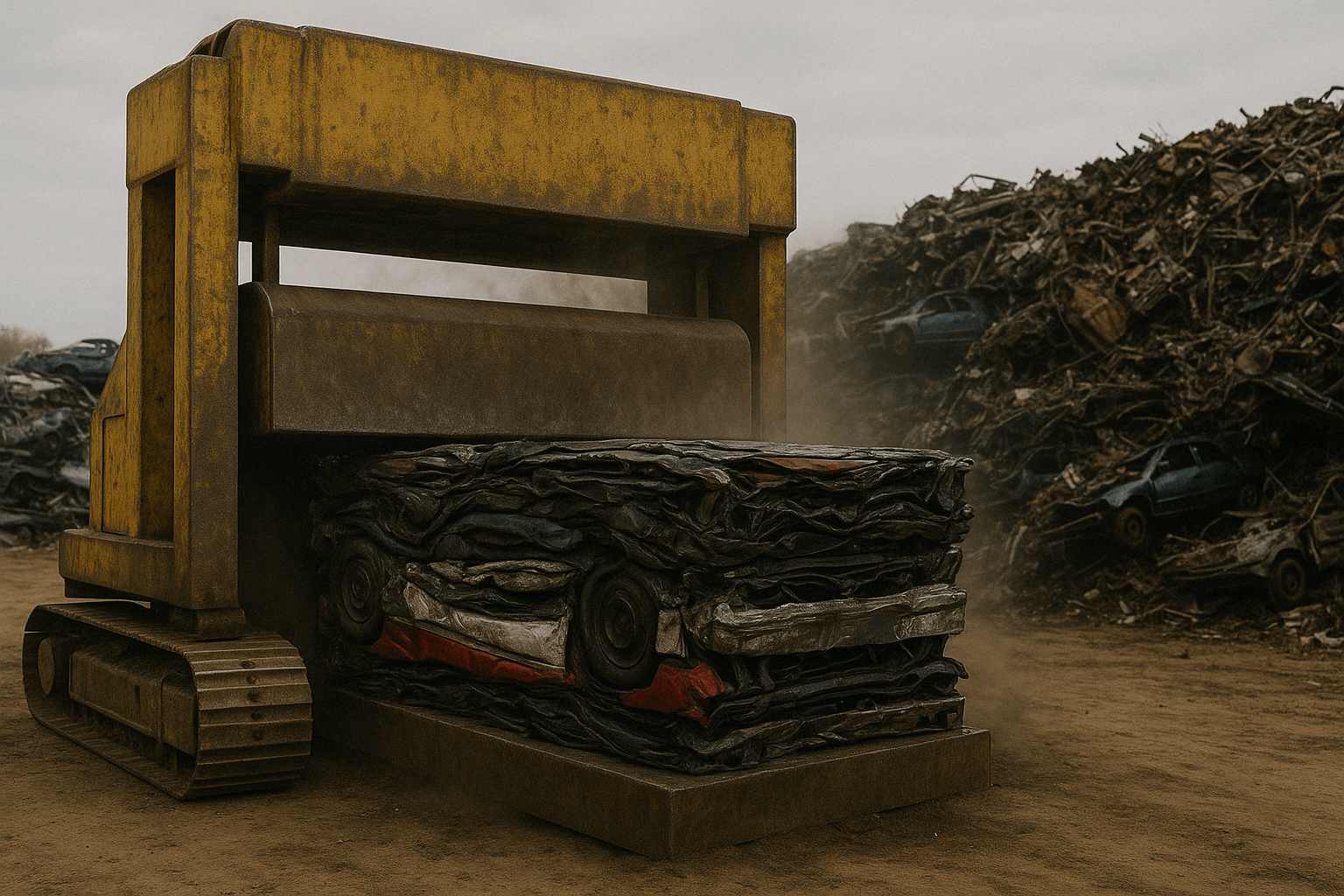

Failure suuuuucks

When you fail, many people will tell you how failure is a great teacher. And they’re not wrong. But you know what else is a great teacher? Success! And success is a lot less expensive than failure.

Calling internal ctors in your unit tests

One of my pet peeves is when I’m using a .NET client library that uses internal constructors for its return type. For example, let’s take a look at the Azure.AI.OpenAI NuGet package. Now, I don’t mean to single out this package, as this is a common practice. It just happens to be the one I’m using at the moment. It’s an otherwise lovely package. I’m sure the authors are lovely people.

When Your DbContext Has The Wrong Scope

This is the final installment of the adventures of Bill Maack the Hapless Developer (any similarity to me is purely coincidental and a result of pure random chance in an infinite universe). Follow along as Bill continues to improve the reliability of his ASP.NET Core and Entity Framework Core code. If you haven’t read the previous installments, you can find them here:

Why Did That Database Throw That Exception?

In the previous installment of the adventures of the hapless developer, Bill Maack, Bill faced some code that tries to recover from a race condition when creating a User if the User entity doesn’t already exist.

How to Recover from a DbUpdateException With EF Core

There are cases where recovery from an Entity Framework Core (EF Core) DbUpdateException is possible if you play your cards right. Play them wrong and the result is heartbreak and tears as every call to SaveChangesAsync rethrows the same exception.

C# List Pattern Examples

We recently upgraded Abbot to .NET 7 and C# 11 and I’m just loving the new language features in C#. In this post, I’ll give a couple of examples of list patterns.

So you want to speak at conferences

I just finished speaking at my favorite conference, the Caribbean Developer’s Conference. Held in a wonderful resort in Punta Cana, Dominican Republic, it brings together a local and international crowd of speakers and attendees. I’ve gushed about it before.
